Bridging Real-Time Rendering and Captured Reality

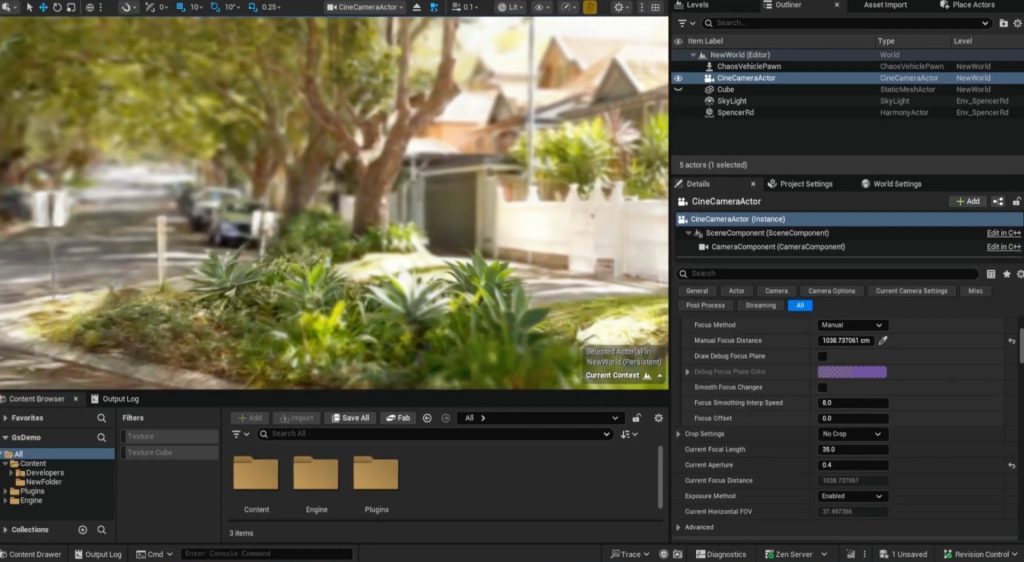

Harmony is a self-initiated R&D project exploring how Gaussian Splatting can be integrated into Unreal Engine, driven by a personal interest in bridging captured reality with real-time rendering.

It focuses on combining high-fidelity captured environments seamlessly with traditional 3D content, without requiring fully modelled scenes.

About The Project

Harmony was developed to address a gap between emerging Gaussian Splatting techniques and production-ready real-time pipelines. While splats provide highly efficient, photoreal scene reconstruction, integrating them into established engines like Unreal introduces challenges around rendering order, lighting, transparency, and compositing.

The project focuses on creating a practical, production-friendly solution that allows splat-based environments to coexist with Unreal’s standard rendering pipeline. This includes handling depth, tone mapping, and transparency in a way that maintains visual consistency while preserving performance.

The result is a system that enables hybrid scenes, combining traditional geometry with captured environments, opening up new possibilities for automotive, virtual production, and immersive experiences.

Key Features

Hybrid Rendering Pipeline

Combines Gaussian splats with traditional Unreal geometry, enabling both to coexist within a single real-time scene.

High-Fidelity Environment Rendering

Delivers photoreal environments using Gaussian splatting, preserving fine detail and lighting information from captured data.

Custom Compositing & Tonemapping

Implements a tailored rendering pipeline to correctly blend splats with Unreal’s lighting, transparency, and post-processing.

Real-Time Performance Optimisation

Uses GPU-based sorting, culling, and caching strategies to maintain performance while rendering large splat datasets.

Process & Technical Insights

The core challenge in Harmony is not rendering splats in isolation, but integrating them into Unreal’s existing rendering pipeline without breaking visual consistency. Gaussian splatting naturally operates outside of traditional rasterisation assumptions, particularly around depth, transparency, and lighting, which introduces conflicts when combined with engine-native geometry.

To address this, the system renders splats into intermediate buffers and reintroduces them through a controlled compositing stage, ensuring correct interaction with tone mapping and post-processing. Depth approximation techniques are used to maintain believable occlusion, while foreground and background separation helps mitigate common artefacts around transparency and sorting.
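As a rough illustration of the compositing idea, the sketch below blends a single pixel of a splat buffer over a single pixel of the engine scene, using the scene depth to decide occlusion before tone mapping is applied. It is not Harmony's actual implementation: the function names, the single-depth-per-pixel approximation, the premultiplied-alpha convention, and the Reinhard curve are all assumptions chosen to keep the example small.

```python
def composite_pixel(scene_rgb, scene_depth, splat_rgb_premult, splat_alpha, splat_depth):
    """Blend one splat-buffer pixel over one scene pixel (linear HDR values).

    Hypothetical helper: assumes the splat buffer stores premultiplied colour,
    accumulated alpha, and one approximate depth value per pixel.
    """
    # Depth approximation: treat the accumulated splats as one surface at
    # splat_depth. Engine geometry that is closer to the camera occludes it.
    if scene_depth <= splat_depth:
        return tuple(scene_rgb)
    # Premultiplied-alpha "over" operator: splat + (1 - alpha) * scene.
    return tuple(s + (1.0 - splat_alpha) * b
                 for s, b in zip(splat_rgb_premult, scene_rgb))


def tonemap_reinhard(rgb):
    """Simple Reinhard curve, standing in for the engine's tone mapper."""
    return tuple(c / (1.0 + c) for c in rgb)


# Splats at depth 5 in front of scene geometry at depth 10: blended result.
blended = composite_pixel((1.0, 1.0, 1.0), 10.0, (0.5, 0.0, 0.0), 0.5, 5.0)
# Scene geometry at depth 2 in front of the splats: scene colour is kept.
occluded = composite_pixel((1.0, 1.0, 1.0), 2.0, (0.5, 0.0, 0.0), 0.5, 5.0)
# Tone mapping runs once, after the two sources have been combined.
display = tonemap_reinhard(blended)
```

Blending in linear space and tone mapping afterwards means both sources pass through the same curve, which is one way to keep splats and engine geometry visually consistent.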

Performance is driven by GPU-based workflows, including dynamic sorting and aggressive culling, with additional optimisations to avoid unnecessary recomputation when the camera is static. The result is a pipeline that balances visual fidelity with real-time constraints, making Gaussian splatting viable for production use rather than just experimentation.
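The sorting, culling, and static-camera optimisations can be sketched on the CPU as follows. This is a simplified stand-in for a GPU workflow, not the project's code: the `SplatSorter` class, its near/far depth culling (a real system would test the full view frustum), and the cache keyed on the exact camera pose are all hypothetical.

```python
class SplatSorter:
    """CPU sketch of cull + back-to-front sort with a static-camera cache."""

    def __init__(self, centers):
        self.centers = centers      # list of (x, y, z) world-space splat centres
        self._cached_key = None     # camera state used for the cached order
        self._cached_order = None   # splat indices, back to front
        self.sort_count = 0         # how many times a full sort actually ran

    def order_for(self, cam_pos, cam_forward, near=0.1, far=1000.0):
        key = (tuple(cam_pos), tuple(cam_forward), near, far)
        # Camera unchanged since the last call: reuse the previous result.
        if key == self._cached_key:
            return self._cached_order

        visible = []
        for index, centre in enumerate(self.centers):
            # View-space depth: projection of the offset onto the forward axis
            # (cam_forward is assumed to be normalised).
            depth = sum((centre[k] - cam_pos[k]) * cam_forward[k] for k in range(3))
            # Culling: skip splats behind the camera or beyond the far plane.
            if near <= depth <= far:
                visible.append((depth, index))

        # Alpha blending needs the farthest splats drawn first.
        visible.sort(key=lambda item: item[0], reverse=True)
        self._cached_key = key
        self._cached_order = [index for _, index in visible]
        self.sort_count += 1
        return self._cached_order


sorter = SplatSorter([(0, 0, 5), (0, 0, -1), (0, 0, 20), (0, 0, 2)])
first = sorter.order_for((0, 0, 0), (0, 0, 1))
second = sorter.order_for((0, 0, 0), (0, 0, 1))   # static camera: cache hit
moved = sorter.order_for((0, 0, 4), (0, 0, 1))    # camera moved: re-sort
```

In this example the second call returns the cached order without sorting again, while moving the camera invalidates the cache and triggers a fresh cull and sort.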

Got questions?

Feel free to reach out